When AI produces its remarkable results, where do all the calculations take place? AI that answers questions, creates images, and recognizes speech seems to work instantly before our eyes. But there’s an infrastructure operating behind the scenes.

The place where countless computations and massive amounts of data are processed is called a data center. Data centers have become more than just IT equipment—they’re the invisible heart that powers the AI era we live in.

What is a data center?

A data center is a large-scale IT infrastructure that houses countless servers, storage systems for large volumes of data, and network devices for communication between servers and external connections.

The digital requests we make every day are sent to high-performance computers—servers—located somewhere in the world. These requests aren’t processed by a single server. They go through distributed processing across multiple servers, each playing a different role. Storage and networking are configured together so that these servers can reliably perform computations and exchange data. The entire system is housed in one place for centralized operation—this is what we call a data center.

Data centers are where images and videos are safely stored and managed, servers perform computations simultaneously, and AI models are trained and deployed. We can reliably save pictures, run searches, shop, make payments, get directions, and access AI services because data centers serve as the physical foundation for all of it.

Why data centers matter more than ever

The reason data centers are in the spotlight is AI. Before AI adoption, CPU-based servers were at the center of data centers. CPUs alone were enough to run web services and handle general data processing.

But the emergence of AI changed everything. AI computations require high-performance accelerators like GPUs, which consume far more energy and generate far more heat than the CPU-based servers that came before. Traditional data centers, built around conventional cooling and power structures, simply couldn’t handle this computational load.

Today, data centers are no longer just places to store servers—they’re closer to factories where high-density computations run nonstop. As computation density rises and heat increases dramatically, AI-ready data centers that can reliably support these demands have become essential. This shift is the biggest reason data centers are receiving renewed attention.

TEAM NAVER’s GAK data centers

The more people come online and the more digital services expand, the more critical data centers become. With this in mind, TEAM NAVER operates its own data centers, called GAK, managing core infrastructure directly. The name GAK comes from Janggyeonggak, the hall that houses the Tripitaka Koreana, the most complete collection of Buddhist texts and one of Korea’s most treasured cultural heritage artifacts. The name reflects our commitment to safeguarding users’ valuable data and serving as a foundation for future growth.

We handle everything ourselves—from design and planning to construction and operation. This approach goes beyond operational efficiency; it allows us to systematically build the infrastructure design and operational capabilities essential for the AI era.

We currently operate data centers in two regions—Chuncheon and Sejong. These facilities represent years of accumulated expertise and proprietary technology.

GAK Sejong: Hyperscale AI data center

GAK Sejong, in particular, is a hyperscale data center built for AI, with the infrastructure to run large-scale GPU clusters reliably. Simply mounting GPUs on servers isn’t enough for strong performance. Reliable AI computation is only possible when power supply, cooling, and networking all work together.

We were also the first in the world to deploy NVIDIA’s supercomputing infrastructure, SuperPOD, in a real-world production environment. Through this experience, we’ve built the technical capabilities to design and run large-scale GPU clusters, and to optimize core data center infrastructure—cooling, power, and networking—for AI workloads.

Our goal isn’t just to acquire large numbers of GPUs, but to strengthen our AI infrastructure competitiveness through reliable, efficient operation.

Data center core technologies

In the AI era, data centers aren’t simply places to store equipment—they’re highly advanced systems that handle both massive computation and the heat it generates. Let’s look at the core technologies that keep AI data centers running reliably.

Cooling technology: The lifeline of data centers

Cooling is one of the most critical factors in data center operation. When servers run at full capacity, internal temperatures rise quickly—and if cooling fails, the entire system is at risk. Large-scale, GPU-based AI environments generate especially high heat density, making efficient cooling technology essential to AI data center design.

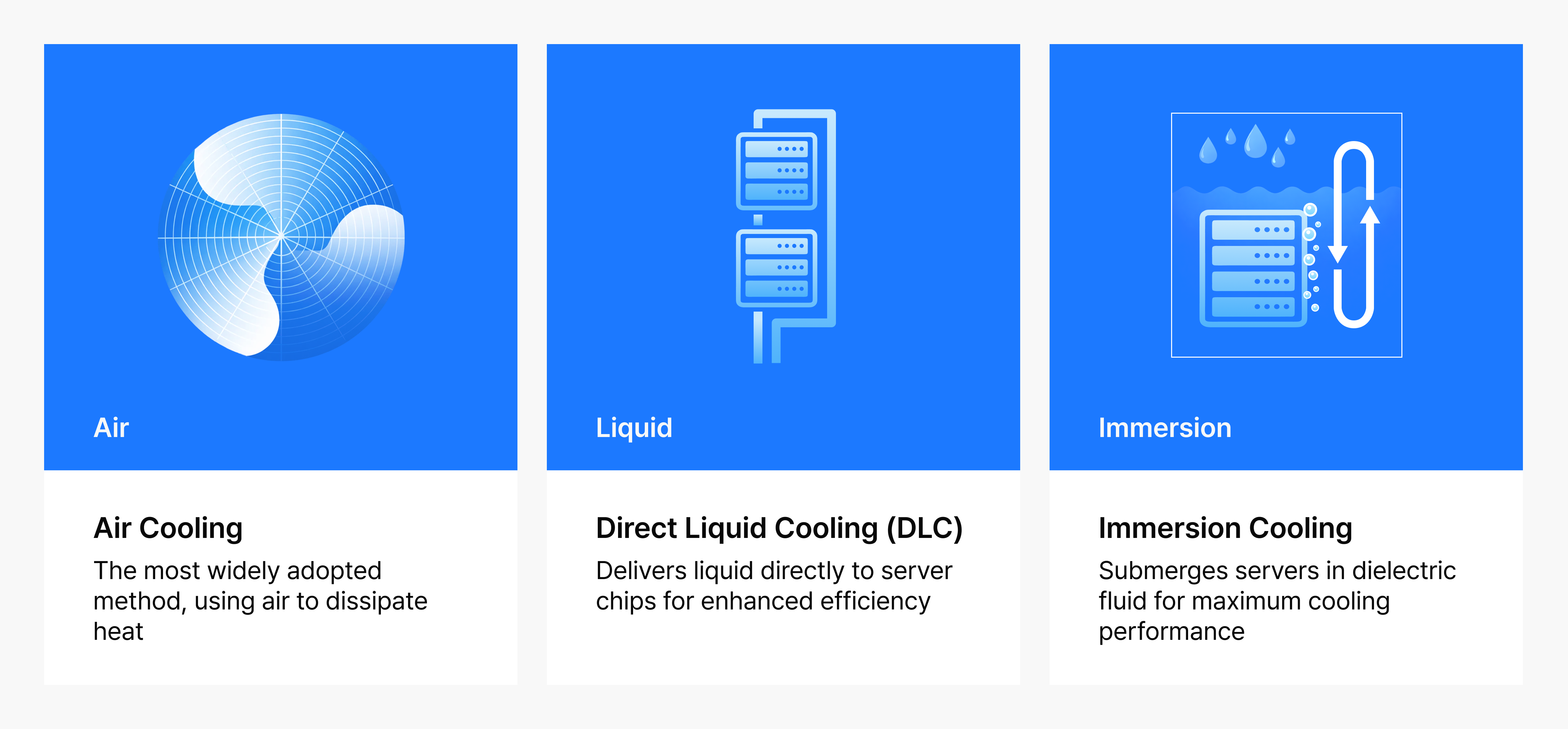

Cooling technologies can be broadly categorized by the medium used to dissipate heat from servers.

Air cooling

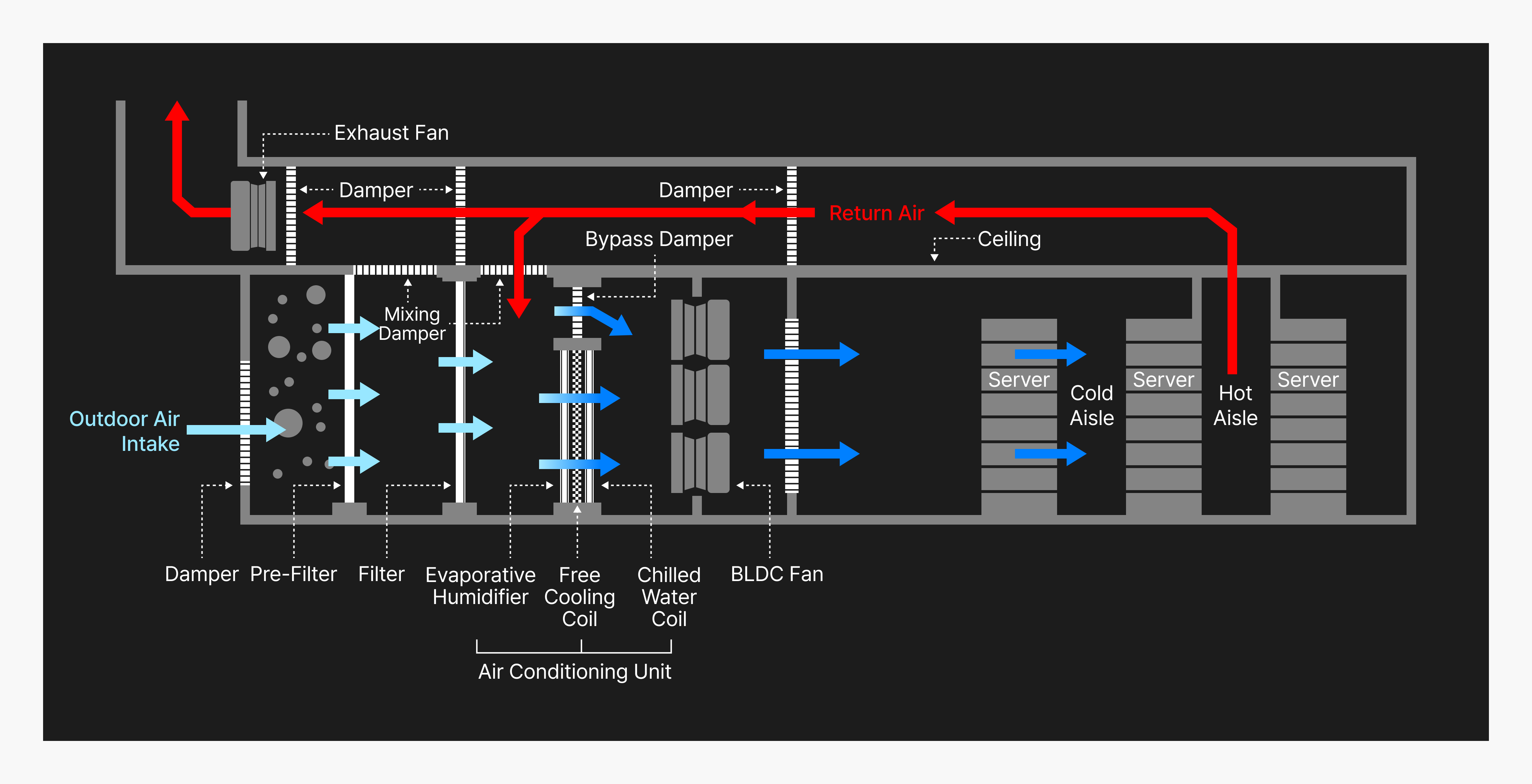

Air cooling uses an air handling unit (AHU) system—composed of outdoor units or cold source equipment like chillers and cooling towers, along with fans—to cool the hot exhaust air discharged from servers. This structure is relatively simple and has long been the industry standard.

But in GPU-based AI infrastructure, power density and heat density per server rack have increased rapidly. Air’s low heat capacity and heat transfer coefficient make it harder to keep up, leading to greater temperature gaps between supply and return air, thermal hotspots, and higher fan power consumption. Air cooling is reaching its physical and structural limits—impacting both cooling efficiency and power usage effectiveness (PUE).

Liquid cooling

To overcome these limitations, cooling technology is shifting from air to liquid, using water or other fluids to remove heat.

- Direct liquid cooling: Cooling water circulates through piping installed inside the server, removing heat directly from components.

- Immersion cooling: The entire server is submerged in a special non-conductive liquid. Because liquid has higher thermal conductivity than air, heat is removed much more rapidly.

Liquid cooling does come with trade-offs: additional piping and cooling systems increase upfront costs, and both design and operation require more sophisticated management.
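To put the gap between air and liquid in rough numbers, here is a minimal sketch based on the standard heat balance Q = ρ · V̇ · c_p · ΔT. The 40 kW rack and the 10 K temperature rise are hypothetical values chosen for illustration, and the fluid properties are approximate room-temperature textbook figures, not measurements from our facilities.

```python
# Approximate room-temperature fluid properties (textbook values).
AIR = {"density": 1.2, "cp": 1005.0}      # kg/m^3, J/(kg*K)
WATER = {"density": 997.0, "cp": 4180.0}  # kg/m^3, J/(kg*K)


def volumetric_flow_m3_s(heat_w: float, delta_t_k: float, fluid: dict) -> float:
    """Flow needed to carry away heat_w watts with a delta_t_k temperature rise.

    Rearranged from Q = density * flow * cp * delta_T.
    """
    return heat_w / (fluid["density"] * fluid["cp"] * delta_t_k)


# Hypothetical example: one 40 kW rack, coolant allowed to warm by 10 K.
rack_heat_w = 40_000.0
delta_t_k = 10.0

air_flow = volumetric_flow_m3_s(rack_heat_w, delta_t_k, AIR)
water_flow = volumetric_flow_m3_s(rack_heat_w, delta_t_k, WATER)

print(f"Air:   {air_flow:.2f} m^3/s")
print(f"Water: {water_flow * 1000:.2f} L/s")
print(f"Air needs about {air_flow / water_flow:,.0f}x the volume of water")
```

Under these assumptions, air has to move a few thousand times more volume than water to carry the same heat, which is why fan power and hotspots become a problem as rack density climbs.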

Figure 1. Data center cooling methods

To maximize cooling efficiency, TEAM NAVER developed a proprietary hybrid air handling unit called NAMU (NAVER Air Membrane Unit). NAMU leverages natural outdoor air while filtering out fine dust and other pollutants—reducing power consumption and improving energy efficiency.

Figure 2. How NAMU III works

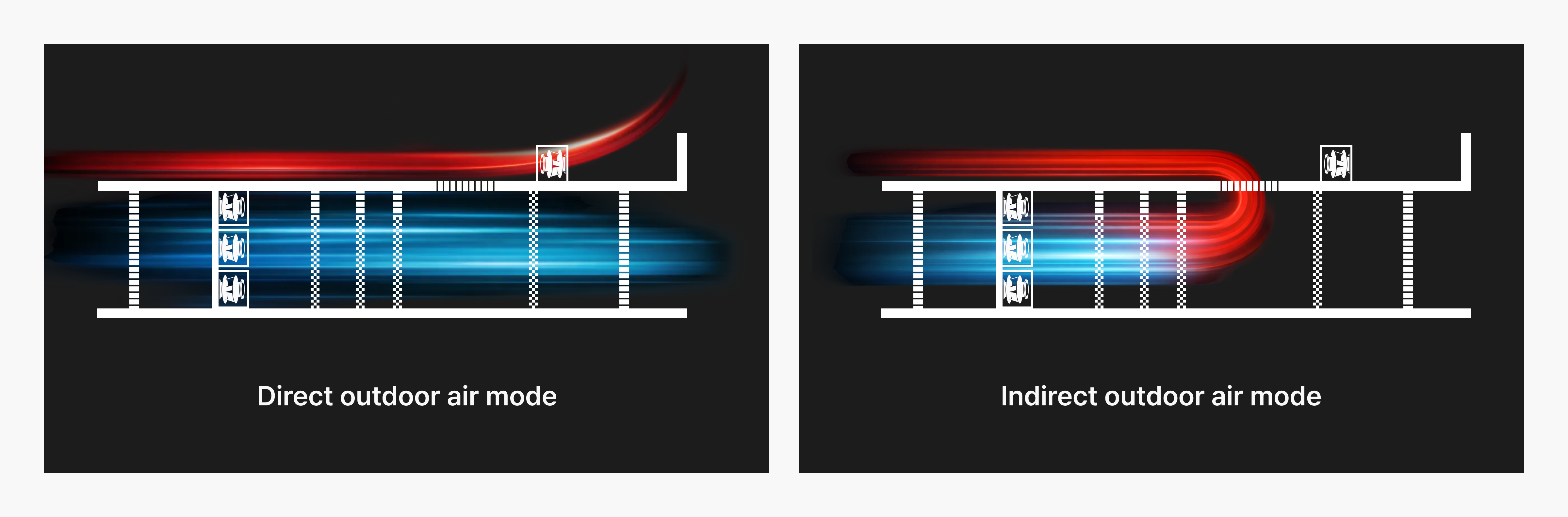

NAMU III, installed at GAK Sejong, uses both direct outside air cooling and indirect outside air cooling, which draws on outdoor air as a cold source. This allows for reliable operation year-round by minimizing the impact of seasonal and environmental conditions. It’s a highly energy-efficient, environmentally friendly cooling technology that flexibly applies the most efficient method based on conditions.

Figure 3. NAMU III: direct free cooling mode (left), indirect free cooling mode (right)

Thanks to this design, TEAM NAVER’s GAK data centers maintain a power usage effectiveness (PUE) in the 1.1 range. PUE, the ratio of a facility’s total energy use to the energy used by its IT equipment, is a key metric for data center energy efficiency: the closer to 1, the better. With the global average sitting at around 1.58, our data centers are achieving exceptionally high efficiency.
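As a rough illustration of what those PUE figures mean in practice, the sketch below applies the standard definition (total facility power divided by IT equipment power) to a hypothetical 1,000 kW IT load. The load is an assumption made for the example, and 1.1 is used as a stand-in for our approximate figure.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """PUE = total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT load must be positive")
    return total_facility_kw / it_equipment_kw


def overhead_kw(it_equipment_kw: float, pue_value: float) -> float:
    """Power used by everything except IT gear (cooling, power conversion, etc.)."""
    return it_equipment_kw * (pue_value - 1.0)


# Hypothetical 1,000 kW IT load, compared at the two PUE values from the text.
it_load_kw = 1000.0
for label, value in [("PUE 1.1", 1.1), ("PUE 1.58", 1.58)]:
    print(f"{label}: {overhead_kw(it_load_kw, value):.0f} kW of overhead")
```

For the same IT load, the facility at PUE 1.58 spends 580 kW on overhead while the one at 1.1 spends 100 kW, so the gap shows up directly in the power bill.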

Power stability: Ensuring uninterrupted operation

Data centers can’t afford a power outage, not even for a second. If power fails, services like search, payments, and storage could all go down at once. This is why data centers have multiple backup power sources: uninterruptible power supply (UPS) systems, large-capacity energy storage, and emergency generators.

A UPS kicks in instantly when power is cut or voltage drops momentarily, using internal batteries or energy storage devices to keep core IT equipment (servers, networks, storage) running without interruption. If grid power from Korea Electric Power Corporation is cut or an internal shutdown occurs, emergency generators start automatically within seconds and maintain power until a stable supply is restored.
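The handover described above can be sketched as a simple timeline. This is a simplified model, not our actual control logic: the function names, the 10-second generator start time, and the 1,000 kW load are all hypothetical, and the sketch does not model the return to grid power.

```python
def active_source(t_s: float, outage_start_s: float, generator_ready_after_s: float) -> str:
    """Return which source carries the IT load at time t_s (in seconds)."""
    if t_s < outage_start_s:
        return "grid"
    if t_s < outage_start_s + generator_ready_after_s:
        return "ups"        # batteries bridge the gap with no interruption
    return "generator"      # generator has started and taken over


def ups_energy_needed_kwh(it_load_kw: float, bridge_time_s: float) -> float:
    """Minimum energy the UPS must deliver while the generator starts."""
    return it_load_kw * bridge_time_s / 3600.0


# Hypothetical scenario: grid fails at t = 5 s, generator is ready 10 s later.
outage_start_s = 5.0
generator_ready_after_s = 10.0

for t in (0, 5, 10, 15, 20):
    print(f"t={t:>2}s -> {active_source(t, outage_start_s, generator_ready_after_s)}")

print(f"UPS energy to bridge: {ups_energy_needed_kwh(1000.0, generator_ready_after_s):.2f} kWh")
```

In a real facility the UPS is sized well beyond this minimum, so the load stays up even if a generator needs more than one start attempt.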

Integrated control system: Managing it all together

In AI data centers, thousands of servers run alongside cooling, power, and security systems—all at once. Rather than managing each component separately, an integrated control system is essential for monitoring the entire operation at a glance and responding to issues instantly.

This kind of system brings together servers, power, cooling, fire safety, equipment, and security into a single view, detecting early signs of failure and resolving them quickly. This minimizes service interruptions and ensures reliable operation. As AI data centers grow larger and more complex, centralized infrastructure management is increasingly becoming a key factor in competitiveness.
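At its simplest, the single-view idea means collecting readings from every subsystem in one place and flagging the ones that cross an early-warning threshold. The sketch below is only an illustration: the `Reading` class, metric names, and threshold values are hypothetical and do not describe the actual GAK control system.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    subsystem: str     # e.g. "cooling", "power", "servers"
    metric: str        # hypothetical metric name
    value: float
    warn_above: float  # hypothetical early-warning threshold


def early_warnings(readings: list[Reading]) -> list[Reading]:
    """Return the readings that exceed their early-warning threshold."""
    return [r for r in readings if r.value > r.warn_above]


# One combined snapshot across subsystems (all values are made up).
snapshot = [
    Reading("cooling", "supply_air_temp_c", 24.0, 27.0),
    Reading("power", "ups_load_pct", 85.0, 80.0),
    Reading("servers", "gpu_temp_c", 71.0, 85.0),
]

for r in early_warnings(snapshot):
    print(f"[{r.subsystem}] {r.metric}={r.value} exceeds {r.warn_above}")
```

A production system would add trend analysis and correlation across subsystems, but even this basic pattern shows why a shared view catches problems sooner than separate dashboards.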

Figure 4. GAK Sejong data center control center

Conclusion

In the AI era, data centers are more than buildings—operating them is closer to running a city where thousands of electronic brains work simultaneously. As AI becomes widespread, demand for high-performance hardware like GPUs is increasing dramatically, and infrastructure requirements for cooling and power have become more critical than ever.

As AI grows larger and faster, data centers must evolve to be larger, smarter, and more sustainable. With hyperscale data centers becoming the norm, competition is shifting from simply owning data centers to operating them efficiently and reliably for maximum AI performance.

Future competitiveness will depend on how quickly we can adopt energy-efficient cooling technologies, leverage renewable energy, and build robust power, control, and fire protection systems. The data centers that lead the industry will be those that reliably handle AI workloads, achieve best-in-class energy efficiency and sustainability, and have the capability to design and optimize their own power, cooling, and network infrastructure.

AI is a technology that opens new possibilities. But with it comes the responsibility to reliably support the massive computations and data it demands. Data centers—where data is stored and processed nonstop—are like the heart that sustains the human body. As a foundation essential to the AI era, they’ll continue to play a vital role in connecting the world and shaping the future.

Learn more in KBS N Series, AI Topia, episode 9

You can see these concepts in action in the ninth episode of KBS N Series’ AI Topia, “Data centers: The beating heart of the AI era.” Roh Sang Min, Head of Data Center Technology at NAVER Cloud, breaks down these ideas with clear examples and helpful context. It’s a great way to get a fuller picture of the topics covered in this post.